
Xiaoqiang tutorial (17): Using ORB_SLAM2 to create a three-dimensional model of the environment


Visual navigation requires a three-dimensional model of the space. ORB_SLAM2 is an effective algorithm for building such models. It is based on recognizing ORB feature points and offers high accuracy and high operating efficiency. We have modified the original algorithm to add map save and load functions, which makes it better suited to real application scenarios. The following sections describe how to use it.

Note: The xiaoqiang version of ORB_SLAM2 has been upgraded to the Galileo version, which checks at runtime whether a valid certificate is available. Xiaoqiang is configured with a valid certificate by default. If yours does not have one, contact customer service to get a certificate for free. If you are not a xiaoqiang user, you cannot follow this tutorial.

Preparation

Before starting ORB_SLAM2, confirm that Xiaoqiang’s camera is working properly. Xiaoqiang has to move around while building a map with ORB_SLAM2, which makes it inconvenient to display the ORB_SLAM2 status at the same time (remote operation over ssh is not smooth, and a monitor cannot be dragged along while the robot is running). For a better experience, install the Xiaoqiang map remote control Windows client.

Start ORB_SLAM2

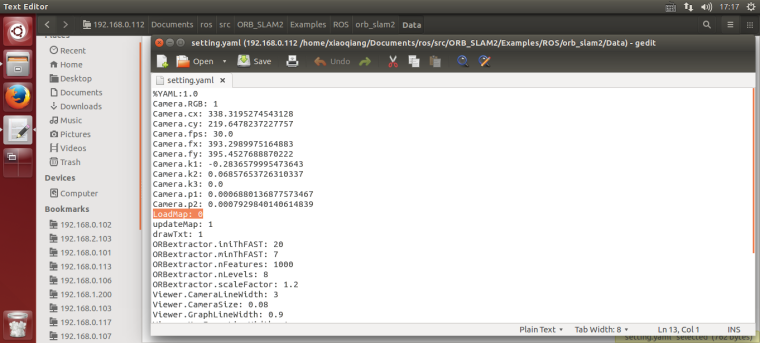

Change the configuration file. The configuration file for ORB_SLAM2 is located in the /home/xiaoqiang/Documents/ros/workspace/src/ORB_SLAM2/Examples/ROS/ORB_SLAM2/Data folder. Change the value of LoadMap in setting4.yaml to 0. When set to 1, the program automatically loads map data from the /home/xiaoqiang/slamdb folder after startup. When set to 0, map data is not loaded. Since we want to create a map, LoadMap needs to be set to 0.
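If you switch between mapping and localization often, the LoadMap change can be scripted. The following is a minimal sketch, not part of the xiaoqiang tooling: the helper name `set_load_map` is hypothetical, and it edits the file as plain text with a regular expression, on the assumption that the OpenCV-style `%YAML:1.0` header may not be accepted by a generic YAML parser.

```python
import re


def set_load_map(settings_text: str, value: int) -> str:
    """Return the settings text with the LoadMap entry set to 0 or 1.

    Hypothetical helper: only the number after 'LoadMap:' is changed,
    so comments and all other parameters stay exactly as they were.
    """
    if value not in (0, 1):
        raise ValueError("LoadMap must be 0 or 1")
    # Match 'LoadMap: <digits>' at the start of a line (updateMap is not matched).
    pattern = re.compile(r"^([ \t]*LoadMap[ \t]*:[ \t]*)\d+", flags=re.MULTILINE)
    new_text, count = pattern.subn(lambda m: m.group(1) + str(value), settings_text)
    if count == 0:
        raise KeyError("no LoadMap entry found in the settings text")
    return new_text


# Small excerpt in the same layout as the settings file shown in this tutorial.
sample = (
    "%YAML:1.0\n"
    "Camera.fps: 30.0\n"
    "LoadMap: 1 # whether to load map, 1 load and 0 not load\n"
    "updateMap: 1\n"
)
updated = set_load_map(sample, 0)
print(updated)
```

To apply it to the real file, read setting4.yaml from the Data folder named above, pass its contents through `set_load_map`, and write the result back. Keeping a backup copy of the original file first is a sensible precaution.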

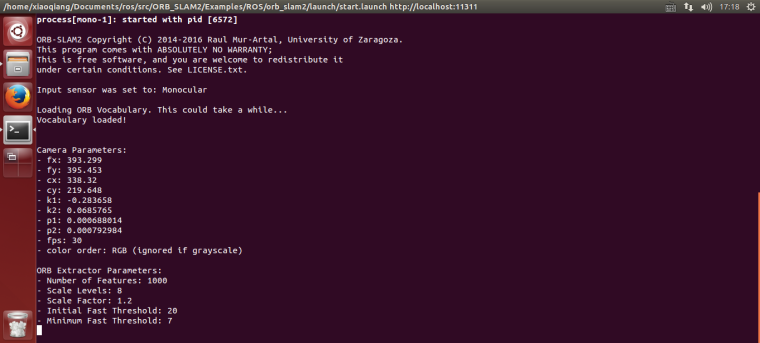

Use ssh to log in to Xiaoqiang and execute the following commands:

    ssh -X xiaoqiang@192.168.xxx.xxx
    roslaunch ORB_SLAM2 map.launch

Start creating the 3D model of the environment

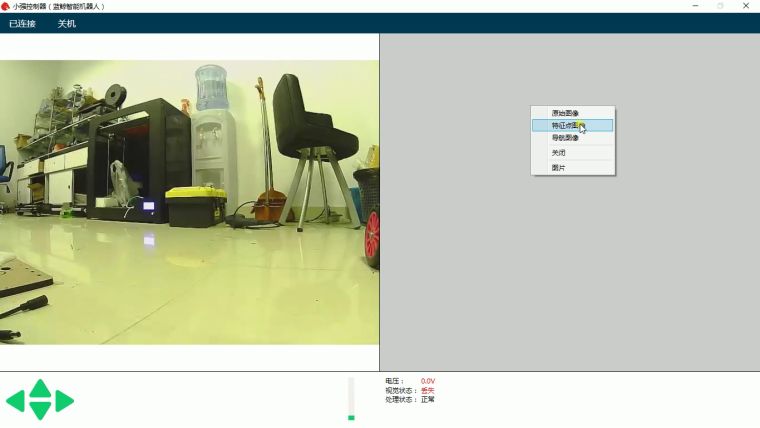

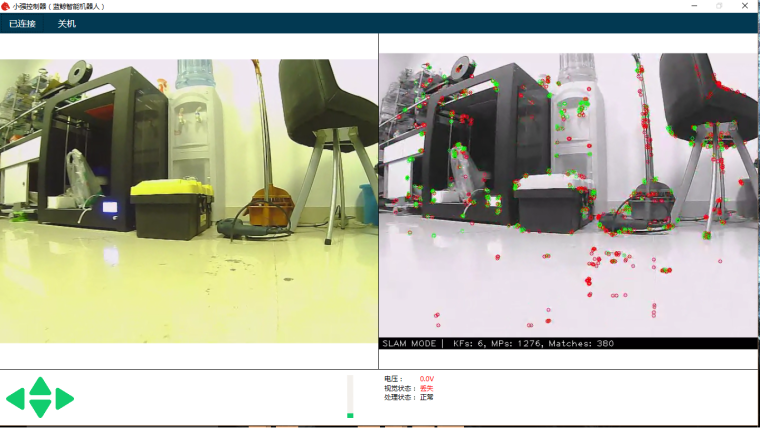

Start the Xiaoqiang remote control Windows client and click the “Not connected” button to connect to Xiaoqiang. Right-click in the image window to open the “original image” and the “ORB_SLAM2’s feature point image”.

In the figure above, the left image is the camera’s original color image and the right image is the black-and-white image processed by ORB_SLAM2. ORB_SLAM2 has not yet been initialized in this image, so the black-and-white image shows no feature points. Hold down the “w” key to drive Xiaoqiang slowly forward by remote control until ORB_SLAM2 initializes successfully, that is, until red and green feature points appear in the black-and-white image.

You can now drive Xiaoqiang by remote control and create a map of the surrounding environment. While driving, make sure the black-and-white image always shows red and green feature points. If they disappear, visual tracking has been lost, and you need to drive Xiaoqiang back to the place where tracking was last working.

Use rviz to view the map result

Add Xiaoqiang IP address in the local virtual machine’s hosts file, then open a new terminal to open rviz.

export ROS_MASTER_URI=http://xiaoqiang-desktop:11311 rvizOpen the

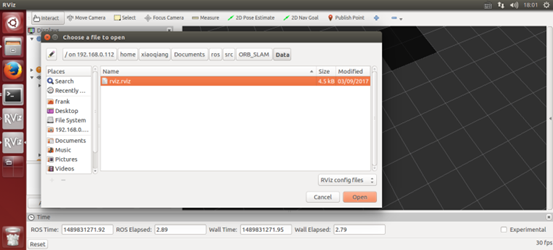

/home/xiaoqiang/Documents/ros/src/ORB_SLAM/Data/rivz.rvizconfiguration file. Some systems may not be able to open this file remotely. You can copy the file locally and open it locally.
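The hosts-file step above can be sketched in code. This is only an illustration under stated assumptions: the function names `ensure_hosts_entry` and `ros_master_uri` are hypothetical, the IP address 192.168.0.10 is a placeholder for your robot's real address, and the hostname xiaoqiang-desktop is taken from the ROS_MASTER_URI used in this tutorial. The sketch works on the hosts file contents as a string, so it changes nothing on your system by itself.

```python
def ensure_hosts_entry(hosts_text: str, ip: str, hostname: str) -> str:
    """Return hosts-file text that contains a mapping for hostname.

    If the hostname is already mapped (to any address), the text is
    returned unchanged; otherwise 'ip<TAB>hostname' is appended.
    """
    for line in hosts_text.splitlines():
        # Ignore comments, then split into address and names.
        fields = line.split("#", 1)[0].split()
        if len(fields) >= 2 and hostname in fields[1:]:
            return hosts_text
    if hosts_text and not hosts_text.endswith("\n"):
        hosts_text += "\n"
    return hosts_text + f"{ip}\t{hostname}\n"


def ros_master_uri(hostname: str, port: int = 11311) -> str:
    """Build the value to export as ROS_MASTER_URI."""
    return f"http://{hostname}:{port}"


hosts = "127.0.0.1\tlocalhost\n"
# 192.168.0.10 is a placeholder, not the address of a real robot.
updated_hosts = ensure_hosts_entry(hosts, "192.168.0.10", "xiaoqiang-desktop")
print(updated_hosts)
print(ros_master_uri("xiaoqiang-desktop"))
```

Note that the sketch leaves an existing entry alone even if it points to a different address, so an outdated mapping still has to be corrected by hand.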

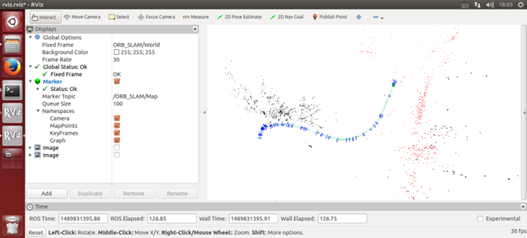

As shown in the figure below, the red and black points form the three-dimensional model that has been created (a sparse feature point cloud), and the blue boxes are keyframes, which trace the path of Xiaoqiang.

Save the map

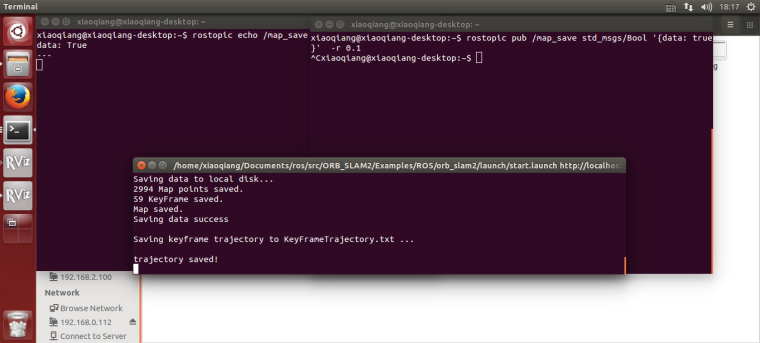

Once the map meets your requirements, open a new terminal in the VM and enter the following commands to save the map:

    ssh -X xiaoqiang@192.168.xxx.xxx
    rostopic pub /map_save std_msgs/Bool '{data: true}' -1

The map file will be created in ~/home/slamdb.

Load the map

After saving the map, you can load it into ORB_SLAM2 again. Once the map is loaded, the program can quickly localize the camera. Loading the map is simple:

    roslaunch ORB_SLAM2 run.launch

The launch file sets LoadMap to 1 for you.

Post-processing of maps

After creating the map, you may want to use it for navigation. This requires further operations on the map file, for example creating a navigation path. These steps are covered in the article Visual Navigation Path Editor Tutorial.

Xiaoqiang users can use the Galileo navigation client. For more details, refer to the Galileo navigation user manual.

ORB_SLAM2 parameter explanation

The following is an ORB_SLAM2 settings file. The meaning of each parameter is explained in the comments.

    %YAML:1.0
    Camera.RGB: 1
    # Camera calibration parameters,the parameters has moved to usb_cam after 2018.6
    Camera.cx: 325.0466266836216
    Camera.cy: 238.9974356449355
    Camera.fps: 30.0
    Camera.fx: 395.6779619478691
    Camera.fy: 397.4934332366201
    Camera.k1: -0.2805598785308578
    Camera.k2: 0.06685114307459132
    Camera.k3: 0.0
    Camera.p1: -0.0009688764167839786
    Camera.p2: -0.0002636873513136712
    LoadMap: 0 # whether to load map, 1 load and 0 not load
    updateMap: 1 # whether to update map while running,1 update and 0 not update.
    drawTxt: 1 # whether to display text in processed video
    # ORB_SLAM2 slam algorithm-related parameters
    ORBextractor.iniThFAST: 20
    ORBextractor.minThFAST: 7
    ORBextractor.nFeatures: 2000
    ORBextractor.nLevels: 8
    ORBextractor.scaleFactor: 1.2
    # ORB_SLAM2 display-related parameters
    Viewer.CameraLineWidth: 3
    Viewer.CameraSize: 0.08
    Viewer.GraphLineWidth: 0.9
    Viewer.KeyFrameLineWidth: 1
    Viewer.KeyFrameSize: 0.05
    Viewer.PointSize: 1000
    Viewer.ViewpointF: 500
    Viewer.ViewpointX: 0
    Viewer.ViewpointY: -0.7
    Viewer.ViewpointZ: -1.8
    UseImu: 1 # whether to use IMU, 1 use and 0 not use.
    updateScale: 1 # Whether update scale while running, if set 1, the visual scale will be updated according to the data from odom
    DealyCount: 5
    # tf between camera and base_link
    TbaMatrix: !!opencv-matrix
      rows: 4
      cols: 4
      dt: f
      data: [ 0., 0.03818382, 0.99927073, 0.06,
              -1., 0., 0., 0.,
              0., -0.99927073, 0.03818382, 0.0,
              0., 0., 0., 1.]
      # data: [ 0., 0.55176688, 0.83399839, 0., -1., 0., 0., 0., 0., -0.83399839, 0.55176688, 0., 0., 0., 0., 1.]
    EnableGBA: 1 # whether to enable Global Bundle Adjustment
    dropRate: 2 # frame skip rate, skip frames to improve performance
    minInsertDistance: 0.3 # min distance between frames, prevent keyframes from being too dense. Insert only one frame at this distance.
    EnableGC: 0 # Whether to enable memory recycling, 0 is disable, 1 is enable. Enable memory recycling can make more efficient use of memory, and the relative computational efficiency will decrease

FAQ

Q: Can I create a map without the client?

A: Yes, you can. The client is mainly for remote control and video streaming. You can use command-line remote control instead, but the client is recommended for a better experience.

Q: Can I choose not to use the IMU?

A: This ORB_SLAM2 is a modified version with additional IMU support. If you don’t want to use the IMU, set UseImu to 0 in setting.yaml.

Q: Where is xiaoqiang’s camera calibration file?

A: The camera calibration file is located at usb_cam/launch/ov2610.yaml.

Q: The camera’s image is not clear.

A: The camera’s lens may have been touched and knocked out of focus. Turn the lens until the image is sharp. Note that the camera parameters need to be re-calibrated after this adjustment. Calibration methods can be found in how to calibrate camera.
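To see why re-calibration matters, it can help to look at how the calibration values map a 3D point to a pixel. The sketch below assumes that the Camera.fx, Camera.fy, Camera.cx, Camera.cy, Camera.k1, Camera.k2, Camera.k3, Camera.p1 and Camera.p2 entries follow the usual OpenCV pinhole model with radial and tangential distortion. The function name `project_point` is hypothetical, and the numbers are copied from the sample settings file in this tutorial, not from your own camera.

```python
def project_point(point, fx, fy, cx, cy, k1=0.0, k2=0.0, p1=0.0, p2=0.0, k3=0.0):
    """Project a 3D point in camera coordinates to pixel coordinates.

    Assumes the OpenCV pinhole model with radial (k1, k2, k3) and
    tangential (p1, p2) distortion.
    """
    X, Y, Z = point
    if Z <= 0:
        raise ValueError("the point must be in front of the camera (Z > 0)")
    # Normalized image coordinates.
    x, y = X / Z, Y / Z
    r2 = x * x + y * y
    radial = 1.0 + k1 * r2 + k2 * r2 ** 2 + k3 * r2 ** 3
    # Apply radial and tangential distortion.
    xd = x * radial + 2.0 * p1 * x * y + p2 * (r2 + 2.0 * x * x)
    yd = y * radial + p1 * (r2 + 2.0 * y * y) + 2.0 * p2 * x * y
    # Scale by the focal lengths and shift by the principal point.
    return fx * xd + cx, fy * yd + cy


# Values copied from the sample settings file above (not your own calibration).
CAMERA = dict(
    fx=395.6779619478691, fy=397.4934332366201,
    cx=325.0466266836216, cy=238.9974356449355,
    k1=-0.2805598785308578, k2=0.06685114307459132, k3=0.0,
    p1=-0.0009688764167839786, p2=-0.0002636873513136712,
)

# A point on the optical axis lands on the principal point (cx, cy).
center = project_point((0.0, 0.0, 1.0), **CAMERA)
# An off-axis point is shifted by the focal length and the distortion terms.
off_axis = project_point((0.1, -0.05, 1.0), **CAMERA)
print(center, off_axis)
```

Turning the lens changes the focal length and the distortion, so the stored values no longer describe where points actually land in the image.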